Search our knowledge base, curated by global Support, for answers ranging from account questions to troubleshooting error messages.

Featured Content

-

How to contact Qlik Support

Qlik offers a wide range of channels to assist you in troubleshooting, answering frequently asked questions, and getting in touch with our technical experts. In this article, we guide you through all available avenues to secure your best possible experience.

For details on our terms and conditions, review the Qlik Support Policy.

Index:

- Support and Professional Services; who to contact when.

- Qlik Support: How to access the support you need

- 1. Qlik Community, Forums & Knowledge Base

- The Knowledge Base

- Blogs

- Our Support programs:

- The Qlik Forums

- Ideation

- How to create a Qlik ID

- 2. Chat

- 3. Qlik Support Case Portal

- Escalate a Support Case

- Resources

Support and Professional Services; who to contact when.

We're happy to help! Here's a breakdown of resources for each type of need.

Support

Reactively fixes technical issues as well as answers narrowly defined specific questions. Handles administrative issues to keep the product up-to-date and functioning.

- Error messages

- Task crashes

- Latency issues (due to errors or 1-1 mode)

- Performance degradation without config changes

- Specific questions

- Licensing requests

- Bug Report / Hotfixes

- Not functioning as designed or documented

- Software regression

Professional Services (*)

Proactively accelerates projects, reduces risk, and achieves optimal configurations. Delivers expert help for training, planning, implementation, and performance improvement.

- Deployment Implementation

- Setting up new endpoints

- Performance Tuning

- Architecture design or optimization

- Automation

- Customization

- Environment Migration

- Health Check

- New functionality walkthrough

- Realtime upgrade assistance

(*) reach out to your Account Manager or Customer Success Manager

Qlik Support: How to access the support you need

1. Qlik Community, Forums & Knowledge Base

Your first line of support: https://community.qlik.com/

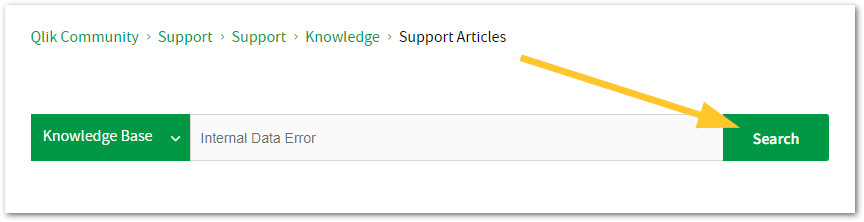

Looking for content? Type your question into our global search bar:

The Knowledge Base

Leverage the enhanced and continuously updated Knowledge Base to find solutions to your questions and best practice guides. Bookmark this page for quick access!

- Go to the Official Support Articles Knowledge base

- Type your question into our Search Engine

- Need more filters?

- Filter by Product

- Or switch tabs to browse content in the global community, on our Help Site, or even on our Youtube channel

Blogs

Subscribe to maximize your Qlik experience!

The Support Updates Blog

The Support Updates blog delivers important and useful Qlik Support information about end-of-product support, new service releases, and general support topics.

The Qlik Design Blog

The Design blog is all about product and Qlik solutions, such as scripting, data modelling, visual design, extensions, best practices, and more!

The Product Innovation Blog

By reading the Product Innovation blog, you will learn about what's new across all of the products in our growing Qlik product portfolio.

Our Support programs:

Q&A with Qlik

Live sessions with Qlik Experts in which we focus on your questions.

Techspert Talks

Techspert Talks is a free monthly webinar to facilitate knowledge sharing.

Technical Adoption Workshops

Our in-depth, hands-on workshops allow new Qlik Cloud Admins to build alongside Qlik Experts.

Qlik Fix

Qlik Fix is a series of short videos with helpful solutions for Qlik customers and partners.

The Qlik Forums

- Quick, convenient, 24/7 availability

- Monitored by Qlik Experts

- New releases publicly announced within Qlik Community forums

- Local language groups available

Ideation

Suggest an idea, and influence the next generation of Qlik features!

Search & Submit Ideas

Ideation Guidelines

How to create a Qlik ID

Get the full value of the community.

Register a Qlik ID:

- Go to: qlikid.qlik.com/register

- You must enter your company name exactly as it appears on your license; otherwise there will be significant delays in getting access.

- You will receive a system-generated email with an activation link for your new account. Note: this link expires after 24 hours.

If you need additional details, see: Additional guidance on registering for a Qlik account

If you encounter problems with your Qlik ID, contact us through Live Chat!

2. Chat

Incidents are supported through our Chat, by clicking Chat Now on any Support Page across Qlik Community.

To raise a new issue, all you need to do is chat with us. With this, we can:

- Answer common questions instantly through our chatbot

- Have a live agent troubleshoot in real time

- Create a case on your behalf, with step-by-step intake questions, for items that need further investigation

3. Qlik Support Case Portal

Log in to manage and track your active cases in Manage Cases.

Please note: to create a new case, it is easiest to do so via our chat (see above). Our chat will log your case through a series of guided intake questions.

Your advantages:

- Self-service access to all incidents so that you can track progress

- Option to upload documentation and troubleshooting files

- Option to include additional stakeholders and watchers to view active cases

- Follow-up conversations

When creating a case, you will be prompted to enter problem type and issue level. Definitions shared below:

Problem Type

Select Account Related for issues with your account, licenses, downloads, or payment.

Select Product Related for technical issues with Qlik products and platforms.

Priority

If your issue is account related, you will be asked to select a Priority level:

Select Medium/Low if the system is accessible, but there are some functional limitations that are not critical in the daily operation.

Select High if there are significant impacts on normal work or performance.

Select Urgent if there are major impacts on business-critical work or performance.

Severity

If your issue is product related, you will be asked to select a Severity level:

Severity 1: Qlik production software is down or not available, but not because of scheduled maintenance and/or upgrades.

Severity 2: Major functionality is not working in accordance with the technical specifications in documentation or significant performance degradation is experienced so that critical business operations cannot be performed.

Severity 3: Any error that is not Severity 1 Error or Severity 2 Issue. For more information, visit our Qlik Support Policy.

Escalate a Support Case

If you require a support case escalation, you have two options:

- Request to escalate within the case, mentioning the business reasons.

To escalate a support incident successfully, mention your intention to escalate in the open support case. This will begin the escalation process.

- Contact your Regional Support Manager

If more attention is required, contact your regional support manager. You can find a full list of regional support managers in the How to escalate a support case article.

Resources

A collection of useful links.

Qlik Cloud Status Page

Keep up to date with Qlik Cloud's status.

Support Policy

Review our Service Level Agreements and License Agreements.

Live Chat and Case Portal

Your one stop to contact us.

Recent Documents

-

Tabular Reporting events in the management console do not appear for all the use...

Issue reported

Tabular Reporting events in the management console not showing for all the users in the tabular reporting recipient list

Environment

Resolution

When section access is used in a Qlik app, make sure to add all required recipients/users to the section access load script.

For example, users in the Recipient import file should ideally match the users entered in the Section Access load script of the app.

This generally permits users to view management console details such as 'Events', assuming those users also have the necessary 'view' permissions in the tenant in which the app exists.
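As a rough illustration of the check described above, the sketch below compares a recipient list against the users listed in a Section Access table and reports the recipients that are missing. The function name, the sample user IDs, and the case-insensitive comparison are assumptions made for this example; they are not taken from Qlik's documentation.

```python
def recipients_missing_from_section_access(recipients, section_access_users):
    """Return the recipients that do not appear in the Section Access user list.

    Assumption for this sketch: user IDs are compared case-insensitively
    and surrounding whitespace is ignored.
    """
    allowed = {user.strip().lower() for user in section_access_users}
    return [r for r in recipients if r.strip().lower() not in allowed]


# Hypothetical sample data, for illustration only
recipients = ["alice@example.com", "bob@example.com", "carol@example.com"]
section_access = ["ALICE@example.com", "carol@example.com"]

missing = recipients_missing_from_section_access(recipients, section_access)
print(missing)
```

Any recipient reported here would need to be added to the load script before their events can be expected to show up.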

Cause

If some of the recipients in the tabular reporting recipient list do not have access to the Space/App, they won't be considered in the task execution because they fail governance.

That is, recipients/users that are not added to the load script will have access to neither the app nor the associated management console events.

This is expected behavior.

Related Content

- Tabular reporting and section access

- URL to Events in your management console: https://yourtenant.us.qlikcloud.com/console/events

- Reviewing system events

-

DataRobot Predictions from Either Path You Choose

In my recent Getting SaaSsy with DataRobot post, I documented how to use the DataRobot Analytics Connector from within your Qlik Sense applications. I know this will sound crazy but what if you want to make predictions on data you aren't loading into your application? Maybe you are collecting input parameters in your application from end users to play what-if games. Maybe you will record the predictions but you also want to take immediate action based on their values (i.e., prescriptive analytics). Well, those things sound like perfect use cases for Qlik Application Automation.

It gets better my friends. Whether you are using a dedicated on-premise DataRobot server, a dedicated tenant or you are on the leading edge path with DataRobot's shiny new AI Cloud Manager using Paxata, Qlik Application Automation has you covered, and so do I.

In this post, I will help you identify the right DataRobot Connector Block to use for your path, help you understand how to execute predictions, and help you understand what to do with the output from the predictions.

Choose your Path

You have already chosen your DataRobot path, now it's just a matter of choosing the correct block from the DataRobot Connector. You probably would have guessed from the elaborate way I described the DataRobot choices which Qlik Application Automation block goes to which environment. But to be sure ... If you have a dedicated DataRobot server, or you have a dedicated tenant, you should use the List Prediction Explanation from Dedicated Prediction API block. If you are using the DataRobot AI Manager environment with Paxata, you should use the List Predictions block.

Oh no! What's that you are saying? You weren't told which path your organization chose, you were just given credentials and you just log in. Don't sweat it. I can help you with that. Just go to your Deployments within DataRobot, choose the Deployment you are going to execute predictions against, and choose Predictions, Prediction API and Real-time. DataRobot will provide all of the clues we need to choose the right block.

If the API URL contains App2.datarobot.com like in the first image below you are working with their AI Cloud and will need to use the List Predictions block. However, if you see a Dedicated path in your API_URL such as Qlik.orm.datarobot.com (second image) you will need to use the List Predictions from Dedicated Prediction API block.

There are some other clues above as well. Notice that in the first image the rest of the URL path contains api/v2/deployments, while the second image contains predApi/v1.0/deployments. It's basically DataRobot telling you which of their APIs you need to utilize.

So how will that help you know for sure? One of the things that many people seldom look at with Qlik Application Automation blocks is the Description. Simply drag either/both of the blocks onto your canvas and scroll all the way down in the right panel. If you look at the List Predictions from Dedicated Prediction API description you will see the following and notice it clearly indicates predApi/v1.0/deployments.

However, if you press the Show API endpoint link for the List Predictions block it will look like this. Both are dead giveaways as to the block/path you should choose.
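The URL clues above can be turned into a small check. The following is only a sketch: the function name and the sample URLs (including the deployment ID `abc123`) are hypothetical, and it assumes the two path fragments discussed above (`api/v2/deployments` and `predApi/v1.0/deployments`) are enough to tell the environments apart.

```python
def choose_block(api_url):
    """Suggest a DataRobot connector block based on the deployment's API URL.

    Returns None when neither of the two known path fragments is present.
    """
    url = api_url.lower()
    if "predapi/v1.0/deployments" in url:
        return "List Predictions from Dedicated Prediction API"
    if "api/v2/deployments" in url:
        return "List Predictions"
    return None


# Hypothetical URLs shaped like the ones shown in the deployment details
ai_cloud_url = "https://app2.datarobot.com/api/v2/deployments/abc123/predictions"
dedicated_url = "https://qlik.orm.datarobot.com/predApi/v1.0/deployments/abc123/predictions"

print(choose_block(ai_cloud_url))
print(choose_block(dedicated_url))
```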

Configuring your Connection

Regardless of which block you are using, you will first need to create a Connection for Qlik Application Automation to your DataRobot environment. If you have a Dedicated server your Connection details will look like this. Notice that you will need to copy the api_key value right from the DataRobot Deployment Details:

Your DataRobot AI Cloud connection will look similar and again you would need to copy your api_key from the deployment details. My DataRobot AI Cloud is just a "trial", hence I chose that region, while my dedicated tenant (above) is the US. The biggest difference is in the domain. Dedicated connections will be app.datarobot.com and AI Cloud connections will be app2.datarobot.com:

Choosing your Deployment

Once you test/save the connections we are ready to start making predictions. The List Predictions block is the easiest to set up so we will start with that one. Simply click the drop-down in the Deployment Id field, press "Do Lookup" and then choose the specific deployment model you are going to be making predictions against.

Then you simply provide the Input data you want to pass to the deployment to have predictions made for. More about the Prediction Data later, but for now notice that I've simply hard-coded a JSON string with field/value pairs:

The List Predictions from Dedicated Prediction API block requires a few more inputs. The first is the Dedicated Prediction Url. Good thing I had you bring up your deployment details because we will just copy it. Notice you do not need the HTTPS:// or the /predApi.. text, just the actual URL information.
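If you would rather not trim the URL by hand, the host part can be extracted programmatically. This is a minimal sketch using Python's standard `urllib.parse`; the function name and the sample URL are hypothetical, and it assumes the block expects only the host name, as described above.

```python
from urllib.parse import urlparse


def dedicated_prediction_host(api_url):
    """Return only the host part of a deployment URL (no scheme, no path)."""
    # urlparse only recognizes the host when a scheme is present,
    # so add one if the caller pasted a bare "host/path" string.
    if "://" not in api_url:
        api_url = "https://" + api_url
    return urlparse(api_url).netloc


# Hypothetical URL copied from the deployment details
full_url = "https://qlik.orm.datarobot.com/predApi/v1.0/deployments/abc123/predictions"
print(dedicated_prediction_host(full_url))
```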

Next, we again simply click the drop-down for the Deployment ID, click Do Lookup and then choose our desired deployment.

Next, we copy the Data Robot Key from our deployment details and then we can insert our JSON block. Again, more later about that so don't panic in thinking I'm suggesting that you hand code the values you want to predict. It's just to make this section easier to navigate. 😁

Qlik Application Automation provides 4 additional parameters that are part of the DataRobot API specification. You can define the Passthrough Columns, Passthrough Columns Set, turn on Prediction Warnings and set the Decimals Number format.

You can refer directly to the DataRobot API documentation for all of the details you wish. For instance, notice that I have the Prediction Warning Enabled set to "true." Getting warnings sounded like a good idea. But alas, I ended up with an error.

Well, it turns out that in order to utilize the Prediction Warning Enabled there is work that must be done on our Deployment within DataRobot.

I guess I could have saved myself the trouble had I read the documentation. Oh well, I simply changed my default back to false so that the prediction can run.

Choosing your Prediction Input Data

Above I simply demonstrated the JSON format you need for your Prediction Data with hard-coded values. I've used my DataRobots and have predicted with a 99.99999999% confidence level that your goal in reading this isn't to hardcode 50+ input values each time you want a prediction. Instead, that data will come from somewhere else. Which is perfectly ok. Maybe you will be pulling the values from some other system as part of a workflow. When A event triggers this Qlik Application you will go do B and C and then assign output from those things to Variables that you will use as the Prediction Data. That's a great plan ... simply choose your variables, and use them where you need them in your Prediction Data. Notice I have already assigned the MasterPatientID variable and am in process of choosing the race variable below.

I'm so sorry. You don't like to use values, and you weren't doing A, B and C you were actually firing a SQL Query live based on input to your workflow and you wanted to use the data from the SQL Query. That is brilliant. Pulling the live data, when whatever event you have chosen triggers the Automation. You should write some posts. No problem, Qlik Application Automation will absolutely allow you to do that.

Or perhaps you are using a writeback solution, like Inphinity Forms, within a Qlik Sense application to capture input parameters and you wish to use those values. Do that.

Or perhaps you are ... You get the point. The Prediction Data simply needs to be a JSON block containing the field/value pairs. How you construct it, or read it from an S3 bucket, or pull it out of thin air doesn't matter. Which is the beauty of working with DataRobot within Qlik Application Automation.
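To make the "JSON block containing the field/value pairs" concrete, here is a small sketch that builds such a string from one or more records. It assumes the block accepts a JSON array of objects; the function name and the sample values are hypothetical, while the field names MasterPatientID and race are the ones mentioned earlier in this post.

```python
import json


def build_prediction_data(rows):
    """Serialize one record (dict) or many records (list of dicts) as JSON.

    Assumption for this sketch: the prediction input is a JSON array with
    one object of field/value pairs per row to score.
    """
    if isinstance(rows, dict):
        rows = [rows]
    return json.dumps(list(rows))


# Hypothetical values; in a real automation these would come from
# variables, a SQL query, a writeback form, or any other source.
payload = build_prediction_data({"MasterPatientID": 12345, "race": "Caucasian"})
print(payload)
```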

Working with your Results

Woohoo, you now have a block that will execute a deployment in your DataRobot environment, regardless of which kind, and we are now ready for those wonderful predictions. Perhaps the first thing you noticed about the blocks List Predictions and List Predictions from Dedicated Prediction API was that they start with List as opposed to Get. It's of course because you may be passing a single row of data as Prediction Data or you could be passing many items in the JSON block. So these blocks are handled as lists, even if it is just a list of 1 prediction.

The DataRobot Connector for loading data into our applications simply returns the Prediction value, which is 0, in this case (the patient is not predicted to be readmitted.) However, notice below that within Qlik Application Automation either prediction block will return the Prediction as well, but it will also return a list of the Prediction values and the scores for each possible value. In my case, the 1, likely to be readmitted was scored at 0.0428973004, whereas the 0, the Prediction, was scored at .571026996.

Who cares?

Well, maybe you do. As I started this post I mentioned that perhaps we want to take action(s) based on the predictions which might be why you are making the prediction in your Qlik Application Automation workflow instead of just making the prediction in a Qlik Sense Application. If we are writing a flow that is "prescriptive" we might want to check the values. Ooooh .49999999999 vs .500000000001. Maybe that will be Action A, just email someone. While .000000001 vs .999999999 tells us that it's safe to go ahead and take the really expensive Action Z. So we might want to set up a Conditional expression.

Regardless of what we do with the values, Qlik Application Automation allows you to simply choose the values right from the block, just like it allowed you to choose Variables or data from another source.
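The kind of conditional logic described above could look roughly like the sketch below. The function name, the action labels, and the 0.5 margin are illustrative assumptions; in an automation you would express the same comparison in a Condition block using the score values returned by the prediction block.

```python
def choose_action(score_a, score_b, margin=0.5):
    """Pick an action from the two class scores of a binary prediction.

    A small gap between the scores means the model is undecided, so only
    the cheap action is taken; a large gap justifies the expensive one.
    The margin of 0.5 is an arbitrary threshold chosen for this sketch.
    """
    gap = abs(score_a - score_b)
    if gap >= margin:
        return "Action Z"  # confident prediction: take the expensive action
    return "Action A"      # near coin-flip: just email someone


print(choose_action(0.49999999999, 0.500000000001))
print(choose_action(0.000000001, 0.999999999))
```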

What about Explanations?

If you don't already know me I will bring you up to speed quickly. I have very defined boundaries and am really particular about how things are worded. For example, take the phrase Data Science. Well, Science is explainable. Therefore, if something isn't explainable it isn't science. And if your predictions aren't explainable, then that isn't Data Science, it's just Data. One of the key reasons you are likely using DataRobot is the fact that it can so wonderfully return explanations for its predictions.

The Prediction of 0 above is nice. But knowing what factors led to the prediction may be just as valuable when helping us choose our prescriptive actions. Well, my friends, Qlik Application Automation has you covered for that scenario as well. In fact, you can see from the following image the block is literally raising its hand and begging you to choose it. List Prediction Explanation from Dedicated Prediction API will give you not only the Prediction, and the Prediction values but it will also return the explanations to you.

Wait something must be wrong. I see a qualitativeStrength of +++, but the second is --. What do those mean? Oh yeah, now I remember ... Qlik Application Automation is just calling the provided DataRobot APIs so I might as well check the documentation from DataRobot so that I get a full and complete understanding of the input parameters I can choose for the block and understand the output values. Sure enough, it's covered.

https://docs.datarobot.com/en/docs/api/reference/predapi/pred-ref/dep-predex.html

Bonus Block

I see you out there on the leading edge doing Time Series Predictions in DataRobot. Not an issue, Qlik Application Automation has you covered with a block as well. Simply choose the List Time Series Predictions from Dedicated Prediction API and you will be good to go.

The initial inputs needed are already covered above. However, there are a few additional parameters you will need to input as well.

Of course, DataRobot has you covered with complete documentation at https://docs.datarobot.com/en/docs/api/reference/predapi/pred-ref/time-pred.html

The information in this article is provided as-is and is to be used at your own discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

Related Content

Getting SaaSsy with Data Robot

Lions and Tigers and Reading and Writing Oh My

-

QMC Reload Failure Despite Successful Script in Qlik Sense Nov 2023 and above

Reload fails in QMC even though the script part is successful in Qlik Sense Enterprise on Windows November 2023 and above.

When you are using NetApp-based storage, you might see an error when trying to publish and replace or when reloading a published app. In the QMC you will see that the script load itself finished successfully, but the task failed after that.

ERROR QlikServer1 System.Engine.Engine 228 43384f67-ce24-47b1-8d12-810fca589657 Domain\serviceuser QF: CopyRename exception:
Rename from \\fileserver\share\Apps\e8d5b2d8-cf7d-4406-903e-a249528b160c.new
to \\fileserver\share\Apps\ae763791-8131-4118-b8df-35650f29e6f6
failed: RenameFile failed in CopyRenameExtendedException: Type '9010' thrown in file 'C:\Jws\engine-common-ws\src\ServerPlugin\Plugins\PluginApiSupport\PluginHelpers.cpp' in function 'ServerPlugin::PluginHelpers::ConvertAndThrow' on line '149'. Message: 'Unknown error' and additional debug info: 'Could not replace collection [\\fileserver\share\Apps\8fa5536b-f45f-4262-842a-884936cf119c] with [\\fileserver\share\Apps\Transactions\Qlikserver1\829A26D1-49D2-413B-AFB1-739261AA1A5E], (genericException)'

<<< {"jsonrpc":"2.0","id":1578431,"error":{"code":9010,"parameter":"Object move failed.","message":"Unknown error"}}

ERROR Qlikserver1 06c3ab76-226a-4e25-990f-6655a965c8f3 20240218T040613.891-0500 12.1581.19.0 Command=Doc::DoSave;Result=9010;ResultText=Error: Unknown error 0 0 298317 INTERNAL sa_scheduler b3712cae-ff20-4443-b15b-c3e4d33ec7b4 9c1f1450-3341-4deb-bc9b-92bf9b6861cf Taskname Engine Not available Doc::DoSave Doc::DoSave 9010 Object move failed. 06c3ab76-226a-4e25-990f-6655a965c8f3

Resolution

Qlik Sense Client Managed version:

- May 2024 Initial Release

- February 2024 Patch 4

- November 2023 Patch 9

Potential workarounds

- Change the storage to a file share on a Windows server

Cause

The most plausible cause currently is that the specific engine version has issues releasing File Lock operations. We are actively investigating the root cause, but there is no fix available yet.

Internal Investigation ID(s)

QB-25096

QB-26125

Environment

- Qlik Sense Enterprise on Windows November 2023 and above

-

Qlik Cloud: The authentication token associated with auto Provisioning is about ...

The Qlik Cloud Management Console displays a warning or error message even though you have stopped using SCIM:

The authentication token associated with auto Provisioning is about to expire

Or

The authentication token associated with auto Provisioning has expired

Resolution

Delete the token that was created for SCIM Auto-provisioning with Azure.

1. Generate a developer API key in your SaaS platform from the profile settings, ensuring that API keys are enabled for the tenant.

2. Using a tool like Postman or any other HTTP client, execute the following curl command to retrieve the API key ID associated with SCIM provisioning:

curl "https://<tenanthostname>/api/v1/api-keys?subType=externalClient" \
  -H "Authorization: Bearer <dev-api-key>"

Replace <tenanthostname> with your actual tenant hostname and <dev-api-key> with your generated developer API key. Make sure the API key is included in the header for authorization.

3. Once you have obtained the key ID from the output, copy it for later use in the deletion process.

4. To delete the API key associated with SCIM provisioning, execute the following curl command:

curl "https://<tenanthostname>/api/v1/api-keys/<keyID>" \
  -X DELETE \
  -H "Authorization: Bearer <dev-api-key>"

Again, replace <tenanthostname> with your actual tenant hostname, <keyID> with the key ID you obtained in step 2, and <dev-api-key> with your developer API key. Make sure the API key is included in the header for authorization.

By following these steps, you can successfully delete the token created for SCIM Auto-provisioning with Azure.
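For readers who prefer a script over Postman, the two calls can be sketched with Python's standard library. This is only an illustration: the function names and the sample tenant, key ID, and API key values are hypothetical, and the sketch only builds the requests (sending them with `urllib.request.urlopen` is left commented out) because the shape of the response is not described in this article.

```python
import urllib.request


def list_keys_request(tenant_hostname, dev_api_key):
    """Build the GET request that lists API keys of subType externalClient."""
    url = f"https://{tenant_hostname}/api/v1/api-keys?subType=externalClient"
    headers = {"Authorization": f"Bearer {dev_api_key}"}
    return urllib.request.Request(url, headers=headers, method="GET")


def delete_key_request(tenant_hostname, key_id, dev_api_key):
    """Build the DELETE request that removes a single API key by its ID."""
    url = f"https://{tenant_hostname}/api/v1/api-keys/{key_id}"
    headers = {"Authorization": f"Bearer {dev_api_key}"}
    return urllib.request.Request(url, headers=headers, method="DELETE")


# Hypothetical placeholder values
list_req = list_keys_request("yourtenant.us.qlikcloud.com", "DEV_API_KEY")
delete_req = delete_key_request("yourtenant.us.qlikcloud.com", "KEY_ID", "DEV_API_KEY")
print(list_req.get_method(), list_req.full_url)
print(delete_req.get_method(), delete_req.full_url)

# To actually send a request (requires network access and a valid key):
# with urllib.request.urlopen(list_req) as response:
#     print(response.read().decode("utf-8"))
```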

The information in this article is provided as-is and is to be used at your own discretion. Depending on the tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

Cause

The token was not deleted when the SCIM Auto-Provisioning was disabled or completely removed.

Environment

-

The Qlik Cloud Azure Storage connector only supports MEMBER User Type Account

Using the GUEST user type when creating an Azure storage account in the Azure portal leads to an error after successful authentication from Qlik.

The remote server returned an error: (400) Bad Request.

Resolution

Use a MEMBER account. Authentication with a GUEST user type is not supported for connecting from Qlik Cloud to Azure.

Related Content

Properties of a Microsoft Entra B2B collaboration user

Permission differences between member users and guest users

Configuring Azure Blob Storage in Qlik Cloud

Environment

- Qlik Cloud

- Azure Storage Connector

-

Qlik Sense QRS API using Xrfkey header in PowerShell

Qlik Sense Repository Service API (QRS API) contains all data and configuration information for a Qlik Sense site. The data is normally added and updated using the Qlik Management Console (QMC) or a Qlik Sense client, but it is also possible to communicate directly with the QRS using its API. This enables the automation of a range of tasks, for example:

- Start tasks from an external scheduling tool

- Change license configurations

- Extract data about the system

Using Xrfkey header

A common vulnerability in web clients is cross-site request forgery, which lets an attacker impersonate a user when accessing a system. Qlik Sense uses the Xrfkey to prevent this. If the Xrfkey is not set in the URL, the server responds with the message: XSRF prevention check failed. Possible XSRF discovered.
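The sketch below shows one way to generate a key and keep the header and the URL parameter in sync. The helper names are hypothetical, and the 16-character length is an assumption based on the example key used in this article (12345678qwertyui); the header name X-Qlik-xrfkey and the xrfkey query parameter are the ones used in the PowerShell examples.

```python
import secrets
import string


def new_xrfkey(length=16):
    """Generate a random alphanumeric key (16 characters, like the example key)."""
    alphabet = string.ascii_letters + string.digits
    return "".join(secrets.choice(alphabet) for _ in range(length))


def qrs_request_parts(base_url, endpoint, xrfkey=None):
    """Return (url, headers) carrying the same Xrfkey in both places."""
    xrfkey = xrfkey or new_xrfkey()
    url = f"{base_url.rstrip('/')}/qrs/{endpoint.lstrip('/')}?xrfkey={xrfkey}"
    headers = {"X-Qlik-xrfkey": xrfkey}
    return url, headers


url, headers = qrs_request_parts(
    "https://qlikserver1.domain.local", "about", "12345678qwertyui"
)
print(url)
print(headers)
```

The important design point is that the value is produced once and reused, so the header and the URL can never drift apart.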

Environments:

Note: This example relates to token-based licenses. If you need to configure it for Professional and Analyzer license types, you might need to use the following API calls:

- /qrs/license/professionalaccesstype/full

- /qrs/license/analyzeraccesstype/full

Furthermore, if you combine this with QlikCli and need to monitor or, more specifically, remove users, the following Community article might be useful: Deallocation of Qlik Sense License

Resolution:

This procedure has been tested in a range of Qlik Sense Enterprise on Windows versions.

- PowerShell 3.0 or higher (Installed by default in Windows 8 / Windows Server 2012 and later)

- Make sure the Qlik Repository service is up and running and port 4242 is open on the target server

Method 1: Authenticating through Qlik Proxy Service

- Go to PowerShell ISE and paste the following script

- In this example we are sending a GET request with a header of Xrfkey=12345678qwertyui and we are addressing the end point of /about. For more details on all end points, please refer to Connecting to the QRS API

$hdrs = @{}
$hdrs.Add("X-Qlik-xrfkey","12345678qwertyui")
$url = "https://qlikserver1.domain.local/qrs/about?xrfkey=12345678qwertyui"
Invoke-RestMethod -Uri $url -Method Get -Headers $hdrs -UseDefaultCredentials

Method 2: Use certificate and send direct request to Repository API

- Open Qlik Management Console and export the certificate. Please refer to Export client certificate and root certificate to make API calls with Postman for procedure.

- Make sure that port 4242 is open between the machine making the API call and the Qlik Sense server.

- Import the certificate on the machine you will use to make API calls. It must be imported into the personal certificate store of your user in MMC. The following PowerShell script automatically fetches the Qlik Client certificate from the Personal certificate store of the current user. You may need to modify the script if the store contains QlikClient certificates imported from different Qlik Sense servers.

- Paste the below script in PowerShell ISE:

$hdrs = @{}
$hdrs.Add("X-Qlik-xrfkey","12345678qwertyui")
$hdrs.Add("X-Qlik-User","UserDirectory=DOMAIN;UserId=Administrator")
$cert = Get-ChildItem -Path "Cert:\CurrentUser\My" | Where {$_.Subject -like '*QlikClient*'}
$url = "https://qlikserver1.domain.local:4242/qrs/about?xrfkey=12345678qwertyui"
Invoke-RestMethod -Uri $url -Method Get -Headers $hdrs -Certificate $cert

Execute the command.

A possible response for the two scripts above may look like this (note that the JSON string is automatically converted to a PSCustomObject by PowerShell):

buildVersion : 23.11.2.0
buildDate : 9/20/2013 10:09:00 AM
databaseProvider : Devart.Data.PostgreSql
nodeType : 1
sharedPersistence : True
requiresBootstrap : False
singleNodeOnly : False
schemaPath : About

Related and advanced Content:

If the store holds certificates from several Qlik Sense servers, they cannot be fetched by subject: several certificates will share the subject QlikClient, and the script will fail because it returns an array of certificates instead of a single certificate. In that case, fetch the certificate by thumbprint. This requires more PowerShell knowledge, but an example can be found here: How to find certificates by thumbprint or name with powershell

-

Scheduler: No Worker Scheduler Found

Scenario:

- Single node

- All services installed, up and running

Possible error messages:

- Unhandled exception: Failed to access performance counter data. Make sure that performance counters are enabled or try rebuilding them with lodctr /R.

- Category does not exist.

- Could not reserve an executor for task: No node found for task(name/id)

- Failed to send alive notification to manager.

- Could not obtain the current CPU load.

- Worker-node XXXX is not alive and cannot run task YYYYYY

Environment:

- Qlik Sense Enterprise on Windows , all versions

- Windows 2012 R2 datacenter edition

Resolution:

Steps for resolution:

1. Rebuild the performance counters (lodctr /R)

Tasks do not reload after upgrade to Qlik Sense June 2018

2. Disable the loopback check

Reopen Registry and navigate to HKEY_LM\System\CCS\Control\LSA\MSV1.0 and create the following DWORD key

DisableLoopbackCheck

Value: 1

3. Then, reboot the box

Related Content:

-

Critical Security fixes for Qlik Sense Enterprise for Windows (CVE-pending)

Executive Summary

A security issue in Qlik Sense Enterprise for Windows has been identified, and patches have been made available. If successfully exploited, this vulnerability could lead to a compromise of the server running the Qlik Sense software, including remote code execution (RCE).

This issue was responsibly disclosed to Qlik and no reports of it being maliciously exploited have been received.

Affected Software

All versions of Qlik Sense Enterprise for Windows prior to and including these releases are impacted:

- February 2024 Patch 3

- November 2023 Patch 8

- August 2023 Patch 13

- May 2023 Patch 15

- February 2023 Patch 13

- November 2022 Patch 13

- August 2022 Patch 16

- May 2022 Patch 17

Severity Rating

Using the CVSS V3.1 scoring system (https://nvd.nist.gov/vuln-metrics/cvss), Qlik rates this severity as high.

Vulnerability Details

CVE-2024-xxxx (QB-26216) Privilege escalation for authenticated/anonymous user

Severity: CVSS:3.1/AV:N/AC:L/PR:L/UI:N/S:U/C:H/I:H/A:H 8.8 (High)

Due to improper input validation, a remote attacker with existing privileges is able to elevate them to the internal system role, which in turn allows them to execute commands on the server.

Resolution

Recommendation

Customers should upgrade Qlik Sense Enterprise for Windows to a version containing fixes for these issues. Fixes are available for the following versions:

- May 2024 Initial Release

- February 2024 Patch 4

- November 2023 Patch 9

- August 2023 Patch 14

- May 2023 Patch 16

- February 2023 Patch 14

- November 2022 Patch 14

- August 2022 Patch 17

- May 2022 Patch 18
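As a rough illustration of how the two lists relate, the sketch below encodes the last affected patch per release from the "Affected Software" list and checks a given installation against it. The function name is_affected is hypothetical, and the lookup only covers the releases named above; anything else should be checked against the advisory text itself.

```python
# Last affected patch per release, copied from the "Affected Software" list.
LAST_AFFECTED_PATCH = {
    "February 2024": 3,
    "November 2023": 8,
    "August 2023": 13,
    "May 2023": 15,
    "February 2023": 13,
    "November 2022": 13,
    "August 2022": 16,
    "May 2022": 17,
}


def is_affected(release, patch):
    """Return True/False for a listed release, or None if the release is not listed.

    Hypothetical helper: patch is the installed patch number (0 for an initial release).
    """
    last_affected = LAST_AFFECTED_PATCH.get(release)
    if last_affected is None:
        return None  # not covered by this table
    return patch <= last_affected


print(is_affected("November 2023", 8))
print(is_affected("November 2023", 9))
```

In this sketch, every fixed version in the list above is exactly one patch higher than the corresponding last affected patch.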

All Qlik software can be downloaded from our official Qlik Download page (customer login required).

Credit

This issue was identified and responsibly reported to Qlik by Daniel Zajork.

-

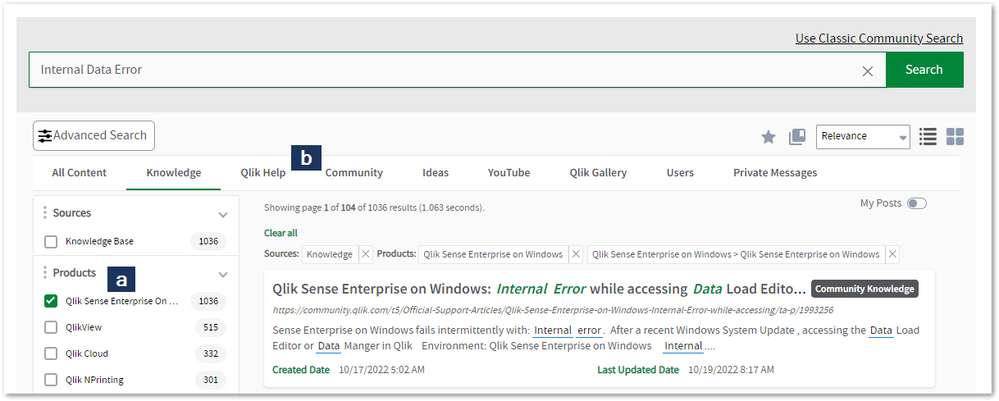

Concurrent Reload Settings in Qlik Sense Enterprise

The max concurrent reloads can be configured in the Qlik Sense Management Console.

- Open the Qlik Sense Management Console

- Navigate to Schedulers

- Enable the Advanced section (if not already on by default)

- Set the required max concurrent reloads

IMPORTANT TO REMEMBER

- As a guideline, a reload node can run up to NumberOfCores - 2 concurrent reloads.

- For example, a reload node with 8 CPU cores can run at most 6 concurrent reloads.

- A reload task typically consumes at least one core, sometimes more.

- Running more concurrent tasks than there are cores does not shorten the total time needed to complete them, because the server is starved of CPU.

- Each running reload is loaded into RAM, so the average RAM footprint grows with the number of parallel reloads.

- With more parallel reloads than cores, the probability of total starvation increases, since the QIX engine is CPU hungry and other processes (for example, the operating system) might not get enough CPU cycles to perform their duties.

- Use the guideline NumberOfCPUCores - 2 when planning capacity for the number of concurrent scheduled reloads.

- Scheduled reloads are queued if the system does not have enough resources.

- To check whether the environment needs capacity planning, open C:\ProgramData\Qlik\Sense\Log\Scheduler\Trace\<Server>_System_Scheduler.txt. An example log is shown below:

<ServerName>_System_Scheduler.txt

Domain\qvservice Engine connection released. 5 of 4 used

Domain\qvservice Engine connection 6 of 4 established

Domain\qvservice Request for engine-connection dequeued. Total in queue: 25
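The NumberOfCores - 2 guideline can be written down as a one-line calculation. The sketch below is only an illustration: the function name is hypothetical, and the floor of 1 for very small machines is an assumption that is not stated in the article.

```python
def suggested_max_concurrent_reloads(core_count):
    """Apply the NumberOfCores - 2 guideline to a reload node.

    Assumption (not from the article): never suggest fewer than 1 reload.
    """
    return max(1, core_count - 2)


# The 8-core example from the list above.
print(suggested_max_concurrent_reloads(8))  # 6
```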

Concurrent settings

Use the "Max concurrent reloads" setting to limit the maximum number of tasks that can run at the same time on the current node. By default, it is set to 4, which means only 4 tasks can run at the same time on this node.

When the 5th task comes in:

- It is queued in sequence.

- The queue has a timeout setting, which removes a queued task once the timeout limit is reached. By default, it is set to 30 minutes. In this case, if none of the first 4 tasks finishes within 30 minutes after the 5th task comes in, the 5th task is cancelled due to the timeout. It then has to be triggered again (manually or at the next scheduled time slot).

- Once one of the 4 running tasks finishes, the 5th task will be executed on this node if it has not timed out.

- If you set the timeout to 0, the queue never times out.

- It is suggested not to run more than 10 concurrent reloads at once, unless the scheduler node has an extreme amount of resources dedicated to it.

- If the node is low on memory or cores, some tasks may start but take several hours to complete. Adding resources to the node running the reloads is suggested.
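To make the queueing rules above concrete, here is a small simulation sketch. It is not how the Qlik Sense Scheduler is implemented; the function name, the minute-based time model, and the choice to let a task run when it has waited exactly as long as the timeout are all assumptions made for illustration.

```python
import heapq


def simulate_queue(tasks, max_concurrent=4, queue_timeout=30):
    """Return {task_name: start_minute}, or None for tasks cancelled by the queue timeout.

    tasks: (name, arrival_minute, duration_minutes) tuples, sorted by arrival.
    queue_timeout: minutes a task may wait; 0 means the queue never times out.
    """
    finish_times = []  # min-heap with the finish time of every occupied slot
    starts = {}
    for name, arrival, duration in tasks:
        # Release slots whose task finished before this one arrived.
        while finish_times and finish_times[0] <= arrival:
            heapq.heappop(finish_times)
        if len(finish_times) < max_concurrent:
            start = arrival
        else:
            start = finish_times[0]  # earliest moment a slot becomes free
            if queue_timeout and start - arrival > queue_timeout:
                starts[name] = None  # cancelled; has to be triggered again
                continue
            heapq.heappop(finish_times)
        heapq.heappush(finish_times, start + duration)
        starts[name] = start
    return starts


# Four long-running tasks fill the node; the 5th arrives 5 minutes later.
busy = [("T1", 0, 60), ("T2", 0, 60), ("T3", 0, 60), ("T4", 0, 60), ("T5", 5, 10)]
print(simulate_queue(busy))                   # T5 is cancelled after waiting too long
print(simulate_queue(busy, queue_timeout=0))  # T5 waits until a slot is free
```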

Multi-node deployment

On a multi-node deployment, tasks will be balanced from the manager node to any node(s) designated as workers.

It is highly advised to check whether the central node is set to Manager and Worker or to Manager. When set to Manager, it sends all reload jobs to the reload/scheduler nodes, as it should. However, if the central node is set to Manager and Worker, the central node also performs reloads. This is not recommended.

The workflow looks as follows:

- Manager node receives a new task execution request.

- Manager node checks the resource availability on each of the worker nodes.

- Manager node assigns this task to the node with the lowest number of running tasks per "Max concurrent reloads" setting.
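The selection step can be sketched as follows. This is an interpretation, not the scheduler's actual code: it assumes that "lowest number of running tasks per Max concurrent reloads setting" means the lowest ratio of running tasks to the configured maximum, and the function and node names are hypothetical.

```python
def pick_worker(nodes):
    """Pick the worker with the lowest running/max ratio.

    nodes: {node_name: (running_tasks, max_concurrent_reloads)}, with a
    positive max_concurrent_reloads for every node. Ties go to the node
    listed first.
    """
    return min(nodes, key=lambda name: nodes[name][0] / nodes[name][1])


workers = {
    "worker-1": (3, 4),  # 75% of its slots in use
    "worker-2": (2, 8),  # 25% of its slots in use
}
print(pick_worker(workers))  # worker-2
```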

The improvement to track the Max concurrent reloads can, if desired, be disabled. This reverts Sense to an older load balancing method that relies only on CPU usage.

To disable the setting:

- Open the Scheduler.exe.config, which by default is located in: C:\Program Files\Qlik\Sense\Scheduler\Scheduler.exe.config

- Set DisableLegacyLoadBalancingBehavior setting to false

- Restart Qlik Sense Scheduler Service

- Repeat these actions on each node of the cluster running the Qlik Sense Scheduler Service

Example

In our example, we allow one concurrent reload, but we assume that two reloads are executed at the same time.

- If Task A is executed first, Task B is queued.

- If Task A is executed first and then fails, Task B is executed after the failure.

- After Task A's failure, Task A is queued and will execute after Task B has finished.

-

Qlik Replicate: Linux Installation warning: Header V4 RSA/SHA256 Signature, key ...

When installing Qlik Replicate v2023.5 on RHEL/CentOS v8.x, you may encounter a warning message from rpm as follows:

warning: areplicate-2023.5.0-213.x86_64.rpm: Header V4 RSA/SHA256 Signature, key ID 05d7eace: NOKEY

This warning message indicates that rpm is unable to verify the package signature due to the absence of the public key.

Resolution

Please perform the following commands before trying to install the RPM package:

- $ rpm -q gpg-pubkey --qf '%{version}-%{release} %{summary}\n' | sed '/qlik.com/!d;s/ .*$//' | xargs -n 1 -I {} sudo rpm -e gpg-pubkey-{}

- $ curl https://qlikcloud.com/.well-known/qlik-codesign-public-keys.asc > qlik-codesign-public-keys.asc

- $ sudo rpm --import qlik-codesign-public-keys.asc

- $ rpm --checksig <qlik-rpm-package>

Where the commands do the following:

- Removes previously installed Qlik code signing public keys (optional but recommended to allow expired keys to be removed)

- Fetches the current Qlik code-signing public keys

- Imports the current public keys into RPM

- Verifies the authenticity of the desired Qlik RPM package

Here is an example of an RPM package that passed the authenticity check:

$ rpm --checksig areplicate-2023.5.0-152.x86_64.rpm

areplicate-2023.5.0-152.x86_64.rpm: digests SIGNATURES OK

An example of a failed check is:

$ rpm --checksig areplicate-2023.5.0-152.x86_64.rpm

areplicate-2023.5.0-152.x86_64.rpm: digests SIGNATURES NOT OK
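If the check is scripted, the two outputs shown above can be told apart by the text after the package name. The following Python sketch does only that string comparison; the function name is hypothetical, and it assumes the output has the single-line shape of the two examples.

```python
def signature_ok(checksig_line):
    """Return True if an `rpm --checksig` output line reports the package as OK.

    Assumes the "<package>: <status>" shape shown in the examples above.
    """
    line = checksig_line.strip()
    status = line.split(":", 1)[1].strip() if ":" in line else line
    return status.endswith("OK") and not status.endswith("NOT OK")


print(signature_ok("areplicate-2023.5.0-152.x86_64.rpm: digests SIGNATURES OK"))
print(signature_ok("areplicate-2023.5.0-152.x86_64.rpm: digests SIGNATURES NOT OK"))
```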

Then proceed with the installation or upgrade as per the instructions in the user guide.

Environment

Qlik Replicate v2023.5, v2023.11 or after on Linux 8.x (64-bit)

-

-

Qlik Sense Custom Themes Causing NPrinting Report Generation Hang Behavior

Issue

An NPrinting report hangs on preview or in a publish task, preventing the report from generating as expected. This problem is seen in many cases with 'Carded' Qlik Sense themes.

It is also seen when the extended sheet feature is used.

Related NP log error

"error=task SENSE_JS_PAINT_COVER_POINTS_TIMEOUT"

Resolution

A fix for 'carded' custom themes is planned; however, it is still under investigation.

Information provided on this defect is given as is at the time of documenting. For up to date information, please review the most recent Release Notes, or contact support with the QB-24997 for reference.

Workaround:

Possible workarounds for this are:

- Disable "Apply Sense app theme" in NPrinting connection definition.

- Reduce the size of any Qlik Sense extended sheets so that they are within default sheet size dimensions (at this point, you may test with and without "Apply Sense app theme" enabled in the NPrinting connection settings).

NOTE: Extended or Custom sheet sizes are not fully supported. See Qlik Help page below for details

Qlik Sense custom and extended sheets

- Qlik Sense custom size sheets and extended sheet features will not be maintained on export.

Cause

- Product Defect ID: QB-24997

- Carded Themes

- Extended Sheets

Environment

- Qlik NPrinting Supported Versions

- Qlik Sense versions affected to be determined and resolved as per QB-24997

-

Qlik Talend Studio: Job fails with error "Unrecognized option: --add-opens"

A Job fails to run on a remote JobServer with the following error message:

Unrecognized option: --add-opens=java.base/java.lang=ALL-UNNAMED

Error: Could not create the Java Virtual Machine.

Error: A fatal exception has occurred. Program will exit.

Cause

The issue occurs when Talend Remote Engine or Talend JobServer uses Java 8 but Talend Studio has JDK 17 enabled. The --add-opens option was introduced with the Java 9 module system, so Java 8 does not recognize it.

If the 'Enable Java 17 compatibility' option is enabled, the Jobs built by Talend Studio will have the --add-opens parameters in the job.sh or job.bat script files, such as:

JAVA_OPTS=-Dfile.encoding=UTF-8 -Dsun.stdout.encoding=UTF-8 -Dsun.stderr.encoding=UTF-8 -Dconsole.encoding=UTF-8 --add-opens=java.base/java.lang=ALL-UNNAMED

The Jobs built this way cannot be executed with Java 8.
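To check whether an already built Job script carries these parameters, the JAVA_OPTS value can be scanned for options starting with --add-opens. The sketch below is a simple illustration in Python; the function name is hypothetical, and it assumes the options are separated by whitespace as in the example line above.

```python
def add_opens_options(java_opts):
    """Return the --add-opens options found in a JAVA_OPTS value.

    A non-empty result means the Job was built with the Java 17
    compatibility option and will not start on Java 8.
    """
    return [opt for opt in java_opts.split() if opt.startswith("--add-opens")]


java_opts = (
    "-Dfile.encoding=UTF-8 -Dsun.stdout.encoding=UTF-8 "
    "-Dsun.stderr.encoding=UTF-8 -Dconsole.encoding=UTF-8 "
    "--add-opens=java.base/java.lang=ALL-UNNAMED"
)
print(add_opens_options(java_opts))
```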

Resolution

Option 1:

- Click the project settings button on the toolbar of the Talend Studio main window, or click File > Edit Project Properties from the menu bar, to open the Project Settings dialog box.

- Expand the Build node and click Java Version to open the corresponding view.

- Deactivate the 'Enable Java 17 compatibility' option.

- Re-build the Job and re-publish it to Talend Remote Engine or Talend JobServer.

Option 2:

From R2024-05, Java 17 will become the only supported version to start most Talend modules. If you want to keep the 'Enable Java 17 compatibility' option enabled, make sure that Talend Remote Engine or Talend JobServer also uses JDK 17 to execute Jobs.

Related Content

Setting-compiler-compliance-level

Environment


-

Why do I get an Invalid Visualization error in my Qlik Apps?

A Qlik app may show an Incomplete Visualization or Invalid Visualization error. This article covers the most common root causes.

Resolution

- Check for and fix incomplete chart dimensions or measures and also check for NULL values.

- Update your visualization bundles:

- These bundles must be the same version as the installed Qlik Sense version. A bundle can be copied from a different Qlik Sense server as long as that server runs the same Qlik version and patch as the target server whose bundle needs updating

- If you use supported 3rd party bundles, check with your vendor and upload a supported, current bundle version

- Use supported Native Qlik Bundles

Cause

Incomplete Visualizations:

- The chart may have invalid dimensions or measures due to recent changes by a developer

Invalid Visualizations:

- Wrong bundle version uploaded to the current Qlik Sense server, perhaps from an older Qlik Sense server

- Unsupported or outdated 3rd party bundles

- Incorrectly developed custom visualization bundle

Related Content

- Visualization bundles

- Dashboard bundles

- Installing, importing and exporting your visualizations

- Creating and editing visualizations

- Troubleshooting - Creating visualizations

- Null values in visualizations

The information in this article is provided as-is and will be used at your discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

-

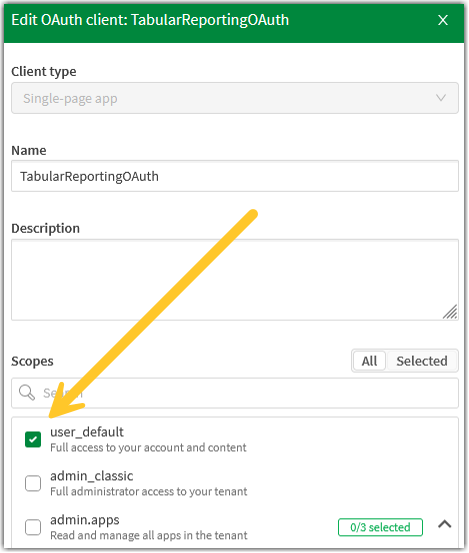

Tabular Reporting OAUTH Error Status 400 resolution

This article describes how to resolve the OAUTH Error Status 400 error preventing successful configuration and download capability of the MS Office addin manifest necessary for Tabular Reporting.

{"errors":[{"title":"Invalid redirect_uri","detail":"redirect_uri is not registered","code":"OAUTH-1","status":"400"}],"traceId":"0000000000000000xxxxxxxxxxxxxxxx"}

Resolution

An OAuth client configuration is required to install the Qlik add-in for Microsoft Excel. The add-in is used by report developers to prepare report templates which control output of tabular reports from the Qlik Sense app.

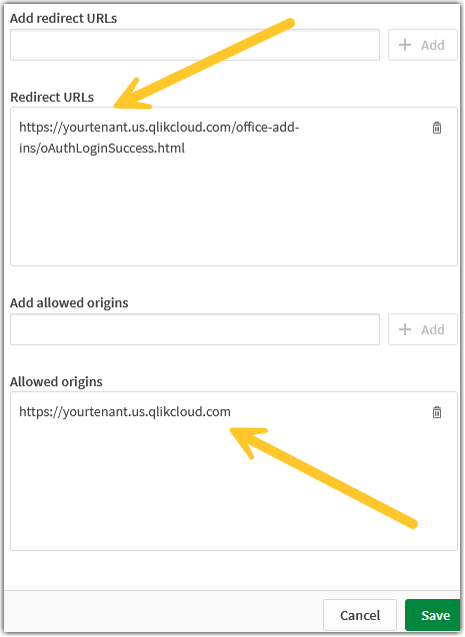

Review or re-do the steps documented in Creating an OAuth client for the Qlik add-in for Microsoft Excel.

Verify you have included the user_default scope.

Example of a working OAUTH configuration:

Cause

The OAUTH configuration was not set correctly, such as missing the user_default scope, or not setting up the redirect link.
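When this error shows up in a log or browser response, the relevant fields can be pulled out of the JSON body. The Python sketch below parses the error body quoted at the top of this article; the function name is hypothetical, and it only assumes the "errors" list shape visible in that example.

```python
import json


def describe_errors(body):
    """Summarize each entry of the "errors" list in an error response body."""
    payload = json.loads(body)
    return [
        f'{err.get("code")} (status {err.get("status")}): '
        f'{err.get("title")} - {err.get("detail")}'
        for err in payload.get("errors", [])
    ]


body = (
    '{"errors":[{"title":"Invalid redirect_uri",'
    '"detail":"redirect_uri is not registered",'
    '"code":"OAUTH-1","status":"400"}],'
    '"traceId":"0000000000000000xxxxxxxxxxxxxxxx"}'
)
for line in describe_errors(body):
    print(line)
```

A "redirect_uri is not registered" detail points at the redirect link in the OAuth client configuration described in the Cause above.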

Related Content

Preparing and obtaining the add-in manifest

Qlik Tabular Reporting General Troubleshooting and Best Practices

Tabular Reporting is available in Qlik Cloud Enterprise and Premium editions. See the Product Description for Qlik Cloud® Subscriptions for details. *Not included in Qlik Cloud Standard and Government editions.

Environment

-

Qlik Replicate SAP ODP CDS View not processing Updates done in SAP

When replicating a CDS View, Updates are processed as Inserts, not Updates. In SAP, the CDS view delta uses UPSERT logic: SAP captures INSERT and UPDATE as one operation, which is treated as INSERT.

If you have a SAP login you can look up SAP Note 3300238 for more information as shown below:

SAP Note 3300238 - ABAP CDS CDC: ODQ_CHANGEMODE not showing proper status for creation

Component: BW-WHM-DBA-ODA (SAP Business Warehouse > Data Warehouse Management > Data Basis > Operational Data Provider for ABAP CDS, HANA & BW), Version: 4, Released On: 19.01.2024

Resolution

This is working as expected. It is the designed behavior of the CDC logic. For both insert and update, ODQ_CHANGEMODE = U and ODQ_ENTITYCNTR = 1.

The CDC-delta logic is designed as UPSERT-logic. This means a DB-INSERT (or create) or a DB-UPDATE both get the ODQ_CHANGEMODE = U and ODQ_ENTITYCNTR = 1. It's not possible to distinguish in CDC-delta between Create and Update.

Environment

Qlik Replicate

SAP S/4HANA

SAP BW/4HANA

-

Aliases are added to the field names when loading from Cloudera with Data Gatewa...

The problem occurs when running a reload from Data Gateway to a Cloudera DB (Cloudera Hive).

The reload is completed correctly, but the fields are imported with aliases. This means that the reload imports the fields as 'TableName.FieldName' instead of importing them as just 'FieldName'.

For example, with this script:

LOAD meter_id,
Field1,
Field2,
Field3;

[meter_attribute]:
SELECT "meter_id",
"Field1",
"Field2",
"Field3"
FROM internaldb."meter_attribute";

Fields are imported as: meter_attribute.Field1, meter_attribute.Field2, meter_attribute.Field3.

Resolution

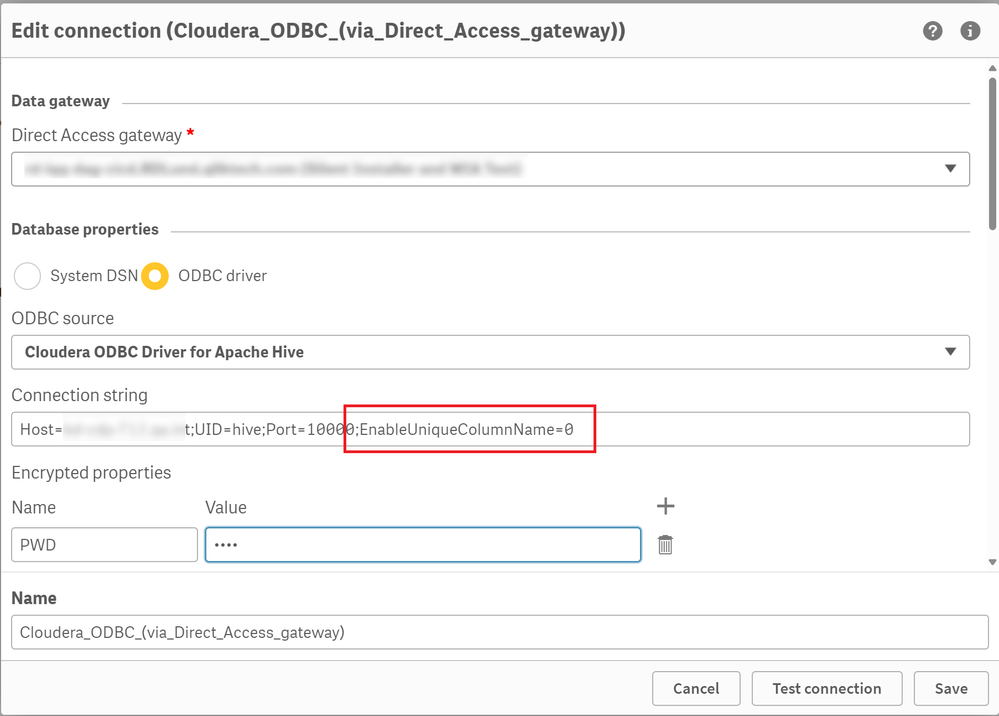

The problem can be fixed by adding the parameter EnableUniqueColumnName=0 to the connection string.

- Edit your Cloudera ODBC connection

- Locate Connection string

- Add EnableUniqueColumnName=0, separating it with a semicolon (;) from the previous parameters:
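As an illustration of the resulting string, the sketch below appends the parameter to an existing semicolon-separated connection string. The function name and the Host/Port values are hypothetical placeholders, and the sketch assumes no parameter value itself contains a semicolon.

```python
def with_unique_column_name_disabled(connection_string):
    """Return the connection string with EnableUniqueColumnName=0 set exactly once."""
    parts = [p.strip() for p in connection_string.split(";") if p.strip()]
    # Drop any earlier EnableUniqueColumnName entry so the new value wins.
    parts = [
        p for p in parts
        if p.split("=", 1)[0].strip().lower() != "enableuniquecolumnname"
    ]
    parts.append("EnableUniqueColumnName=0")
    return ";".join(parts)


# Hypothetical existing parameters; use the ones from your own connection.
print(with_unique_column_name_disabled("Host=cloudera.example.com;Port=10000"))
```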

Additional Notes:

- It is not necessary to select the "Allow non-SELECT queries" option in the connector.

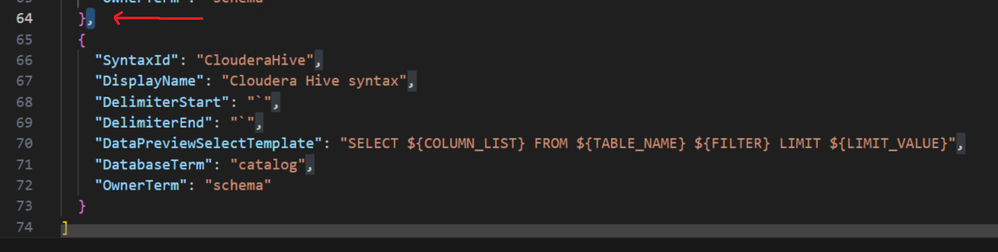

- In order to make Data Preview work reliably with any new Cloudera connections, we recommend adding the following syntax to its syntax.json file:

- Open C:\ProgramData\Qlik\Gateway\genericodbc_database_syntax.json in a text editor with elevated permissions (administrator)

- Add:

{ "SyntaxId": "ClouderaHive", "DisplayName": "Cloudera Hive syntax", "DelimiterStart": "`", "DelimiterEnd": "`", "DataPreviewSelectTemplate": "SELECT ${COLUMN_LIST} FROM ${TABLE_NAME} ${FILTER} LIMIT ${LIMIT_VALUE}", "DatabaseTerm": "catalog", "OwnerTerm": "schema" } - Restart the Data Gateway service.

This snippet re-configures all the custom syntax settings for the driver.

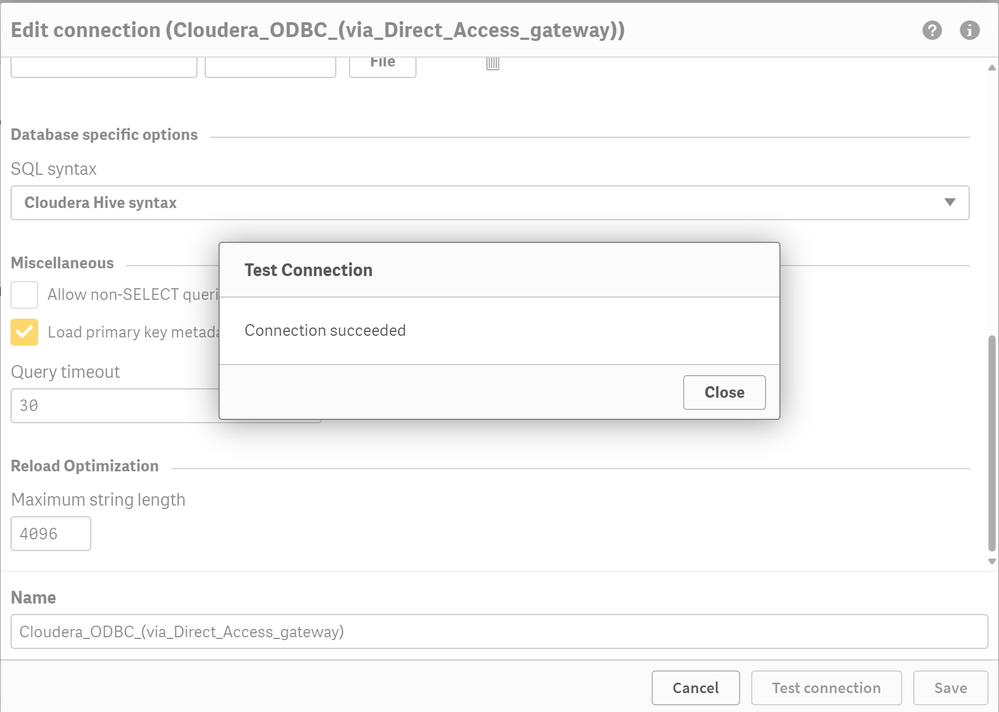

Please, notice that the block must have a comma (,) either immediately before or after it as shown in the screenshot below. - Once restarted, open the data connection editor again and the "Cloudera Hive syntax" option should appear, as in this image:

- Open C:\ProgramData\Qlik\Gateway\genericodbc_database_syntax.json in a text editor with elevated permissions (administrator)
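The comma requirement is ordinary JSON syntax: two neighbouring blocks must be separated by a comma, otherwise the file no longer parses. The Python sketch below demonstrates this with the block from this article. The surrounding array and the "Example" entry are hypothetical stand-ins, since the real layout of genericodbc_database_syntax.json is not shown here.

```python
import json

CLOUDERA_BLOCK = '''{
  "SyntaxId": "ClouderaHive",
  "DisplayName": "Cloudera Hive syntax",
  "DelimiterStart": "`",
  "DelimiterEnd": "`",
  "DataPreviewSelectTemplate": "SELECT ${COLUMN_LIST} FROM ${TABLE_NAME} ${FILTER} LIMIT ${LIMIT_VALUE}",
  "DatabaseTerm": "catalog",
  "OwnerTerm": "schema"
}'''


def is_valid_json(text):
    """Return True if the text parses as JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


# Hypothetical neighbouring entry, for illustration only.
existing = '{ "SyntaxId": "Example", "DisplayName": "Hypothetical existing entry" }'

with_comma = "[" + existing + ",\n" + CLOUDERA_BLOCK + "]"
without_comma = "[" + existing + "\n" + CLOUDERA_BLOCK + "]"

print(is_valid_json(with_comma))     # True
print(is_valid_json(without_comma))  # False
```

Running a check like this on a copy of the edited file before restarting the service is a cheap way to catch a missing or extra comma.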

Internal Investigation ID

QB-26342

Environment

- Qlik Cloud

- Qlik Data Gateway Direct Access all versions

-

Using Kafka with Qlik Replicate

This Techspert Talks session covers:

- How Replicate works with Kafka

- Kafka Terminology

- Configuration best practices

Chapters: 01:06 - Kafka Archit...

-

How to: Getting started with the Amazon SNS connector in Qlik Application Automa...

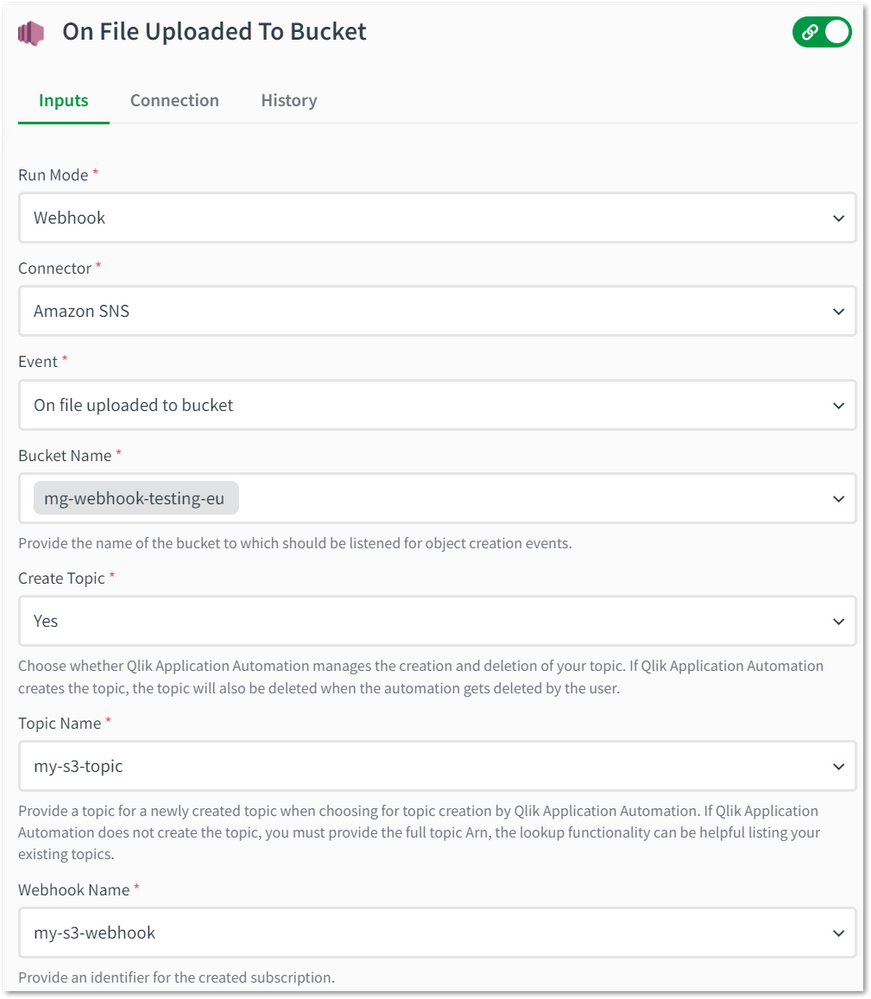

This article explains how the Amazon SNS connector in Qlik Application Automations can be used to set up webhooks that trigger when an object creation event occurs in Amazon S3. This connector only has webhooks available.

Content:

How to connect

Search for the "Amazon SNS" connector in Qlik Application Automations. When you click connect, you will be prompted for the following input parameters:

- Aws_access_key_id: This is the access key you obtain from AWS.

- Aws_secret_access_key: This is the secret key you obtain from AWS.

- Region: Your AWS region where your resources are situated.

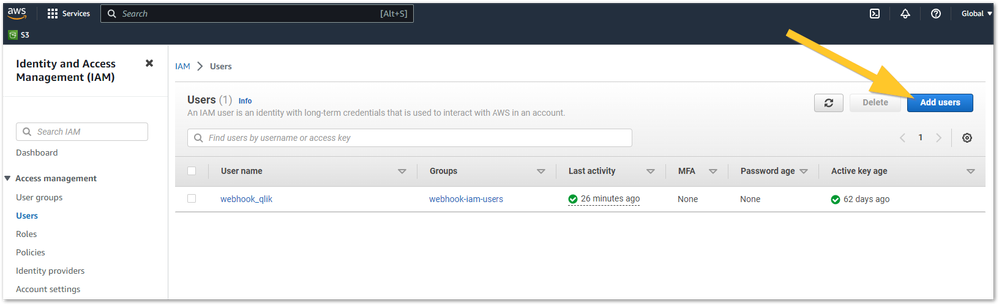

You must obtain the AWS access key from IAM in your AWS console: go to the IAM section in AWS and choose Users in the left side panel.

Here you can either choose an existing user or create a new one by clicking the "Add Users" button in the top right.

When you create a new user, you must provide a user name and click Next. You do not need to give this user access to the AWS console. In the next step, you will give permissions to this IAM user.

The following policy needs to be created and attached to the IAM user. Replace account-id with your account ID:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "s3:PutBucketNotification",
        "iam:PassRole",
        "sns:Publish",
        "sns:CreateTopic",
        "sns:Subscribe"
      ],
      "Resource": [
        "arn:aws:s3:::*",
        "arn:aws:iam::account-id:role/*",
        "arn:aws:sns:*:account-id:*"
      ]
    },
    {
      "Sid": "VisualEditor1",
      "Effect": "Allow",
      "Action": "sns:Unsubscribe",
      "Resource": "*"
    }
  ]
}

Other permissions that are suggested to add are:

- s3:ListBucket

- s3:ListAllMyBuckets

- sns:ListTopics

Furthermore, the IAM user must be made an owner of the S3 bucket when creating a notification configuration.

You will have to create an access key for the IAM user.
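To avoid hand-editing the account-id placeholders, the policy document can be generated with the account ID filled in. The Python sketch below reproduces the policy from this article; the function name and the sample account ID 123456789012 are hypothetical, and the output still has to be attached to the IAM user in AWS.

```python
import json


def build_sns_policy(account_id):
    """Return the policy from this article with account-id replaced."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "s3:PutBucketNotification",
                    "iam:PassRole",
                    "sns:Publish",
                    "sns:CreateTopic",
                    "sns:Subscribe",
                ],
                "Resource": [
                    "arn:aws:s3:::*",
                    f"arn:aws:iam::{account_id}:role/*",
                    f"arn:aws:sns:*:{account_id}:*",
                ],
            },
            {
                "Sid": "VisualEditor1",
                "Effect": "Allow",
                "Action": "sns:Unsubscribe",
                "Resource": "*",
            },
        ],
    }


# Sample account ID for illustration only.
print(json.dumps(build_sns_policy("123456789012"), indent=2))
```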

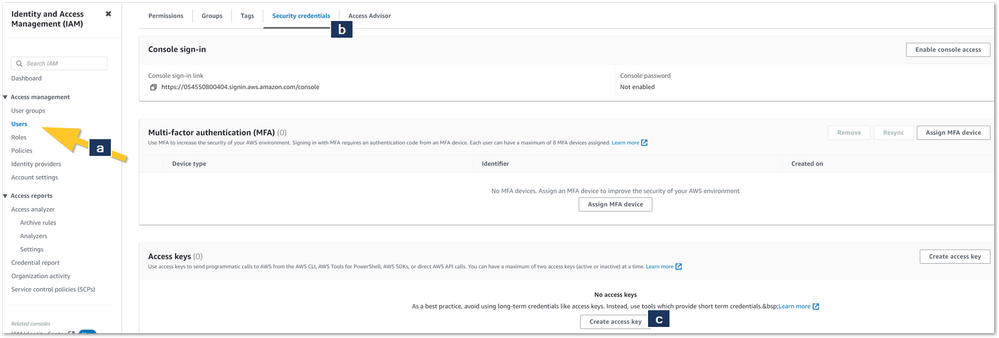

This can be done in the (a) Users menu and in the (b) Security credentials tab. Click (c) Create access key.

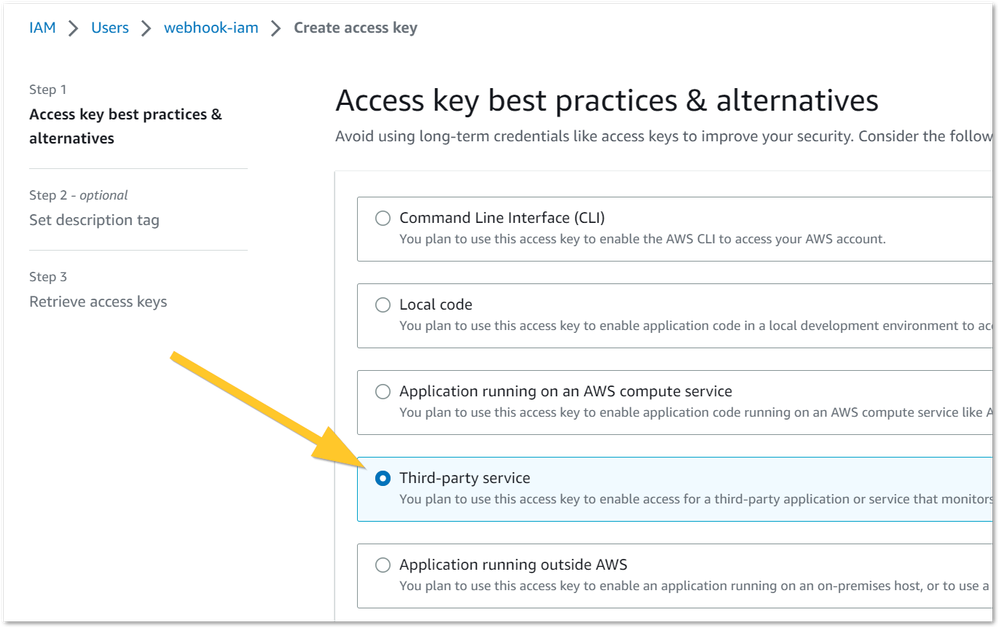

Choose Third-party service, confirm that you understand the above recommendation, and click Next:

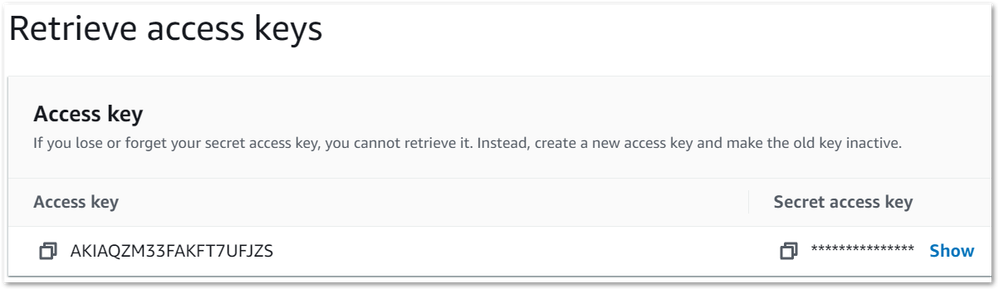

You will now have your access key and secret key and can finish creating the datasource in Qlik Application Automation:

Using this in an automation

You can use this in an automation, but only as a webhook. When you create a new automation, you will be presented with a blank canvas. Select the Start block and change the run mode to webhook.

Choose an event type next. These are currently limited to S3 object creation events. You will have lookup capabilities available to other parameters, such as bucket and topic selection:

After saving the automation, you can test the webhook by uploading objects in your S3 bucket and see in your automation run history that it is triggering this automation.

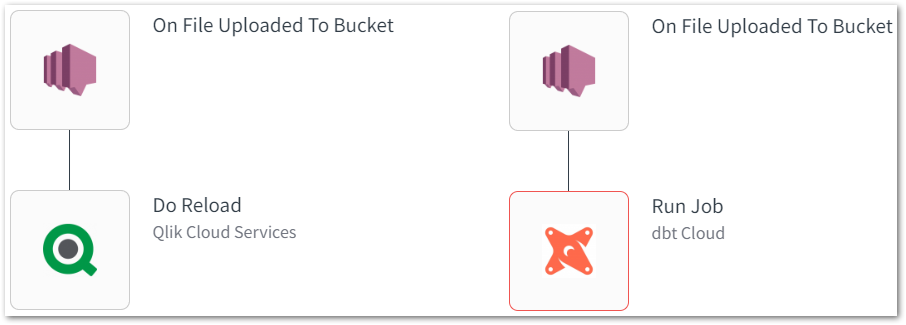

This lets you trigger tasks after an object is uploaded to S3. Common tasks are reloading a Qlik Sense app or triggering a data pipeline through any of our other connectors:

-

Qlik Cloud SMTP Configuration Error: "Email Provider is not working as expected"

If a tenant previously had an incomplete SMTP configuration with their Qlik Cloud, an error message will now be shown to the Tenant Administrator:

- Your email providers is not working – Go to Settings

Resolution

To resolve this error a Tenant Admin can enter valid SMTP credentials.

At the moment, it is not possible to delete or clear the previous credential entry in the authentication settings. An option to clear the credentials is being prepared to support a return to a default (non-configured/empty) state and is expected to be available in the coming weeks. We will update this article when the ability to clear becomes available.

Example SMTP Error

Cause

With the release of the SMTP service connectivity for Microsoft O365 from the Management Console, more stringent error checking was added to the basic authentication configuration.

Related Content

If interested, Admins can still successfully connect to Microsoft O365 SMTP with this error showing. More details on the newly available OAuth2 authentication can be found here: Qlik Cloud: Introducing OAuth2 authentication for ... - Qlik Community - 2444243

Internal Investigation ID

- QB-26792

Environment

- Qlik Cloud

-

Splitting up GeoAnalytics connector operations

Many of the GeoAnalytics operations can be executed with a split input of the indata table. This article explains which ones, and how to modify the code that the connector produces. Operations that cannot be split are mostly the aggregating ones. When loadable tables are used for input, inline tables are created in loops, which offers a quick way to split. It is of course also possible to write custom code to do the splitting instead.

Making calls with large indata tables often causes timeouts on the server side; splitting is a way around that.

Environment:

Splittable ops:

- AddressPointLookup

- Intersects

- IpLookup

- NamedAreaLookup

- NamedPointLookup

- PointToAddressLookup

- Routes

- TravelAreas

- Within

Non-Splittable ops:

- Closest

- Cluster

- Dissolve

- Load

- IntersectsMost

- Simplify

Special ops, splittable:

- Binning

- SpatialIndex

Binning and SpatialIndex

Binning and SpatialIndex differ from other operations: they do not place any call to the server if the indata are internal geometries, i.e. lat/long points. The operations also produce the same type of results, so the resulting tables can be concatenated.

Resolution:

Example, a Within operation

Before the edit

The code as the connector produces it:

/* Generated by Idevio GeoAnalytics for operation Within ---------------------- */
Let [EnclosedInlineTable] = 'POSTCODE' & Chr(9) & 'Postal.Latitude' & Chr(9) & 'Postal.Longitude';
Let numRows = NoOfRows('PostalData');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
  Let chunkText = '';
  Let chunk = n*chunkSize;
  For i = 0 To chunkSize-1
    Let row = '';
    Let rowNr = chunk+i;
    Exit for when rowNr >= numRows;
    For Each f In 'POSTCODE', 'Postal.Latitude', 'Postal.Longitude'
      row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'PostalData'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
    Next
    chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
  Next
  [EnclosedInlineTable] = [EnclosedInlineTable] & chunkText;
Next
chunkText=''

Let [EnclosingInlineTable] = 'ClubCode' & Chr(9) & 'Car5mins_TravelArea';
Let numRows = NoOfRows('TravelAreas5');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
  Let chunkText = '';
  Let chunk = n*chunkSize;
  For i = 0 To chunkSize-1
    Let row = '';
    Let rowNr = chunk+i;
    Exit for when rowNr >= numRows;
    For Each f In 'ClubCode', 'Car5mins_TravelArea'
      row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'TravelAreas5'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
    Next
    chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
  Next
  [EnclosingInlineTable] = [EnclosingInlineTable] & chunkText;
Next
chunkText=''

[WithinAssociations]:
SQL SELECT [POSTCODE], [ClubCode] FROM Within(enclosed='Enclosed', enclosing='Enclosing')
DATASOURCE Enclosed INLINE tableName='PostalData', tableFields='POSTCODE,Postal.Latitude,Postal.Longitude', geometryType='POINTLATLON', loadDistinct='NO', suffix='', crs='Auto' {$(EnclosedInlineTable)}
DATASOURCE Enclosing INLINE tableName='TravelAreas5', tableFields='ClubCode,Car5mins_TravelArea', geometryType='POLYGON', loadDistinct='NO', suffix='', crs='Auto' {$(EnclosingInlineTable)}
SELECT [POSTCODE], [Enclosed_Geometry] FROM Enclosed
SELECT [ClubCode], [Car5mins_TravelArea] FROM Enclosing;

[EnclosedInlineTable] = '';
[EnclosingInlineTable] = '';
/* End Idevio GeoAnalytics operation Within ----------------------------------- */

After edit

The header and the call are moved inside of the loop. chunkSize decides how big each split is.

Note that the first inline table (Enclosed) is now built after the second one (Enclosing), so that the call can sit inside the iteration.

/* Generated by Idevio GeoAnalytics for operation Within ---------------------- */
Let [EnclosingInlineTable] = 'ClubCode' & Chr(9) & 'Car5mins_TravelArea';
Let numRows = NoOfRows('TravelAreas5');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
  Let chunkText = '';
  Let chunk = n*chunkSize;
  For i = 0 To chunkSize-1
    Let row = '';
    Let rowNr = chunk+i;
    Exit for when rowNr >= numRows;
    For Each f In 'ClubCode', 'Car5mins_TravelArea'
      row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'TravelAreas5'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
    Next
    chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
  Next
  [EnclosingInlineTable] = [EnclosingInlineTable] & chunkText;
Next
chunkText=''

Let numRows = NoOfRows('PostalData');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
  Let [EnclosedInlineTable] = 'POSTCODE' & Chr(9) & 'Postal.Latitude' & Chr(9) & 'Postal.Longitude';
  Let chunkText = '';
  Let chunk = n*chunkSize;
  For i = 0 To chunkSize-1
    Let row = '';
    Let rowNr = chunk+i;
    Exit for when rowNr >= numRows;
    For Each f In 'POSTCODE', 'Postal.Latitude', 'Postal.Longitude'
      row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'PostalData'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
    Next
    chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
  Next
  [EnclosedInlineTable] = [EnclosedInlineTable] & chunkText;

  [WithinAssociations]:
  SQL SELECT [POSTCODE], [ClubCode] FROM Within(enclosed='Enclosed', enclosing='Enclosing')
  DATASOURCE Enclosed INLINE tableName='PostalData', tableFields='POSTCODE,Postal.Latitude,Postal.Longitude', geometryType='POINTLATLON', loadDistinct='NO', suffix='', crs='Auto' {$(EnclosedInlineTable)}
  DATASOURCE Enclosing INLINE tableName='TravelAreas5', tableFields='ClubCode,Car5mins_TravelArea', geometryType='POLYGON', loadDistinct='NO', suffix='', crs='Auto' {$(EnclosingInlineTable)}
  SELECT [POSTCODE], [Enclosed_Geometry] FROM Enclosed
  SELECT [ClubCode], [Car5mins_TravelArea] FROM Enclosing;

  [EnclosedInlineTable] = '';
Next
// The enclosing table is needed by every call in the loop, so it is cleared only after the last chunk.
[EnclosingInlineTable] = '';
chunkText=''
/* End Idevio GeoAnalytics operation Within ----------------------------------- */

Example, an AddressPointLookup operation

Before the edit

The code as the connector produces it:

/* Generated by GeoAnalytics for operation AddressPointLookup ---------------------- */
Let [DatasetInlineTable] = 'id' & Chr(9) & 'STREET_NAME' & Chr(9) & 'STREET_NUMBER';
Let numRows = NoOfRows('data');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
  Let chunkText = '';
  Let chunk = n*chunkSize;
  For i = 0 To chunkSize-1
    Let row = '';
    Let rowNr = chunk+i;
    Exit for when rowNr >= numRows;
    For Each f In 'id', 'STREET_NAME', 'STREET_NUMBER'
      row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'data'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
    Next
    chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
  Next
  [DatasetInlineTable] = [DatasetInlineTable] & chunkText;
Next
chunkText=''

[AddressPointLookupResult]:
SQL SELECT [id], [Dataset_Address], [Dataset_Geometry], [CountryIso2], [Dataset_Adm1Code], [Dataset_City], [Dataset_PostalCode], [Dataset_Street], [Dataset_HouseNumber], [Dataset_Match] FROM AddressPointLookup(searchTextField='', country='"Canada"', stateField='', cityField='"Toronto"', postalCodeField='', streetField='STREET_NAME', houseNumberField='STREET_NUMBER', matchThreshold='0.5', service='default', dataset='Dataset')
DATASOURCE Dataset INLINE tableName='data', tableFields='id,STREET_NAME,STREET_NUMBER', geometryType='NONE', loadDistinct='NO', suffix='', crs='Auto' {$(DatasetInlineTable)}
;

[DatasetInlineTable] = '';
/* End GeoAnalytics operation AddressPointLookup ----------------------------------- */

After edit

The header and the call are moved inside of the loop. chunkSize decides how big each split is.

/* Generated by GeoAnalytics for operation AddressPointLookup ---------------------- */
Let numRows = NoOfRows('data');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
  Let [DatasetInlineTable] = 'id' & Chr(9) & 'STREET_NAME' & Chr(9) & 'STREET_NUMBER';
  Let chunkText = '';
  Let chunk = n*chunkSize;
  For i = 0 To chunkSize-1
    Let row = '';
    Let rowNr = chunk+i;
    Exit for when rowNr >= numRows;
    For Each f In 'id', 'STREET_NAME', 'STREET_NUMBER'
      row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'data'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
    Next
    chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
  Next
  [DatasetInlineTable] = [DatasetInlineTable] & chunkText;

  [AddressPointLookupResult]:
  SQL SELECT [id], [Dataset_Address], [Dataset_Geometry], [CountryIso2], [Dataset_Adm1Code], [Dataset_City], [Dataset_PostalCode], [Dataset_Street], [Dataset_HouseNumber], [Dataset_Match] FROM AddressPointLookup(searchTextField='', country='"Canada"', stateField='', cityField='"Toronto"', postalCodeField='', streetField='STREET_NAME', houseNumberField='STREET_NUMBER', matchThreshold='0.5', service='default', dataset='Dataset')
  DATASOURCE Dataset INLINE tableName='data', tableFields='id,STREET_NAME,STREET_NUMBER', geometryType='NONE', loadDistinct='NO', suffix='', crs='Auto' {$(DatasetInlineTable)}
  ;

  [DatasetInlineTable] = '';
Next
chunkText=''
/* End GeoAnalytics operation AddressPointLookup ----------------------------------- */
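Outside of the Qlik script language, the splitting idea is simply "cut the input rows into pieces of at most chunkSize rows and make one call per piece". The Python sketch below illustrates only that slicing; the function name is hypothetical and it is not part of the connector. Unlike the For n = 0 to chunks loop above, it never produces an empty trailing piece.

```python
def split_rows(rows, chunk_size=1000):
    """Yield consecutive slices of rows, each with at most chunk_size items."""
    for start in range(0, len(rows), chunk_size):
        yield rows[start:start + chunk_size]


# 2500 input rows are split into pieces of 1000, 1000 and 500 rows.
rows = list(range(2500))
print([len(piece) for piece in split_rows(rows)])
```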